AI THERAPIST

A hyper-personalized AI therapist experience for emotional balance and companionship.

Year :

2024

Industry :

Healthcare

Client :

Platzi Course

Strategic Challenge

How might we use AI to make mental-health support more human, accessible, and stigma-free?

Nearly 1 billion people live with diagnosable mental disorders, yet the majority cannot access care because of cost, cultural stigma, or lack of availability (World Health Organization, 2023).

People often choose silence over seeking help, fearing judgment or discrimination.

At the same time, AI and voice technology were evolving into tools capable of empathy simulation, opening a new path for emotional accessibility.

Equilibrio was born to explore this intersection:

Can AI reduce anxiety instead of causing it?

Can design make therapy feel safe, not synthetic?

The challenge wasn’t only to build a “chatbot therapist” but to design a trust-centered emotional interface that enables reflection, comfort, and continuity.

Critical Design Decisions

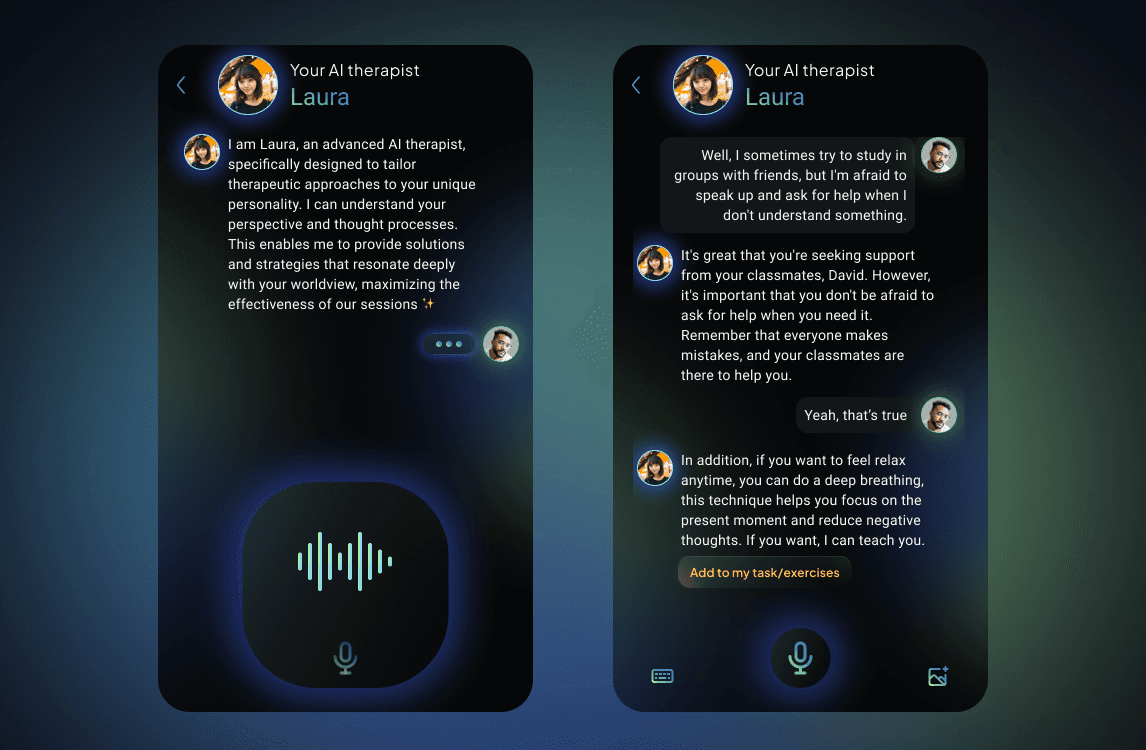

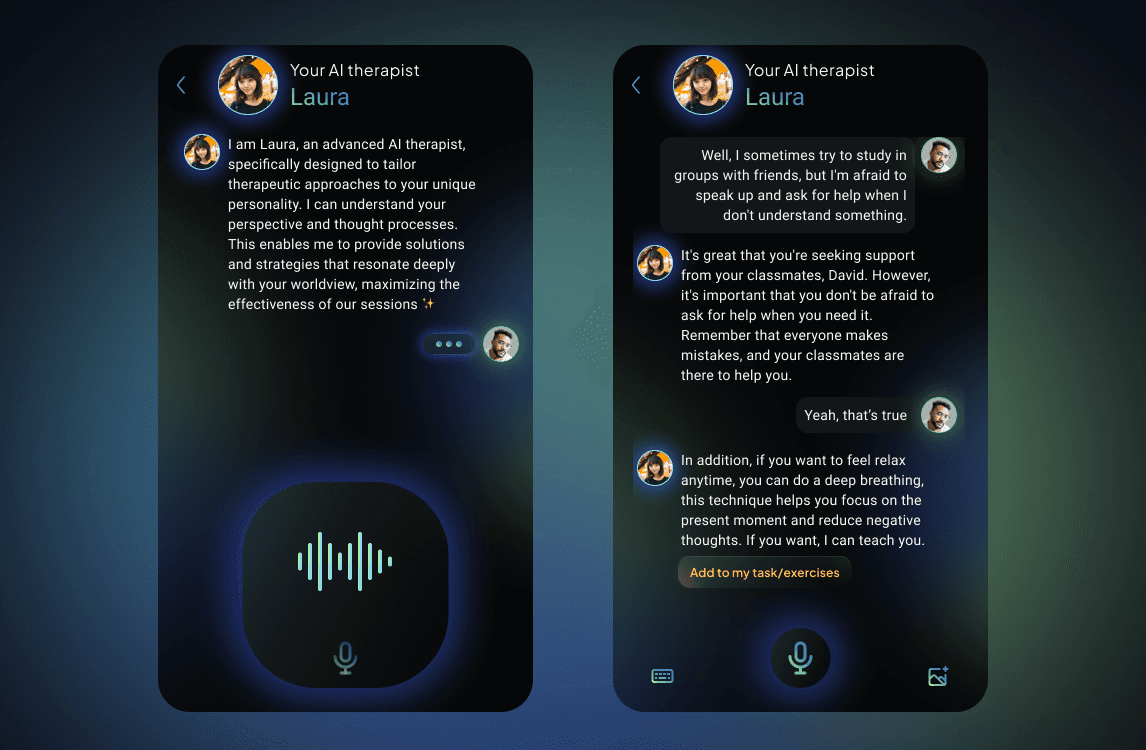

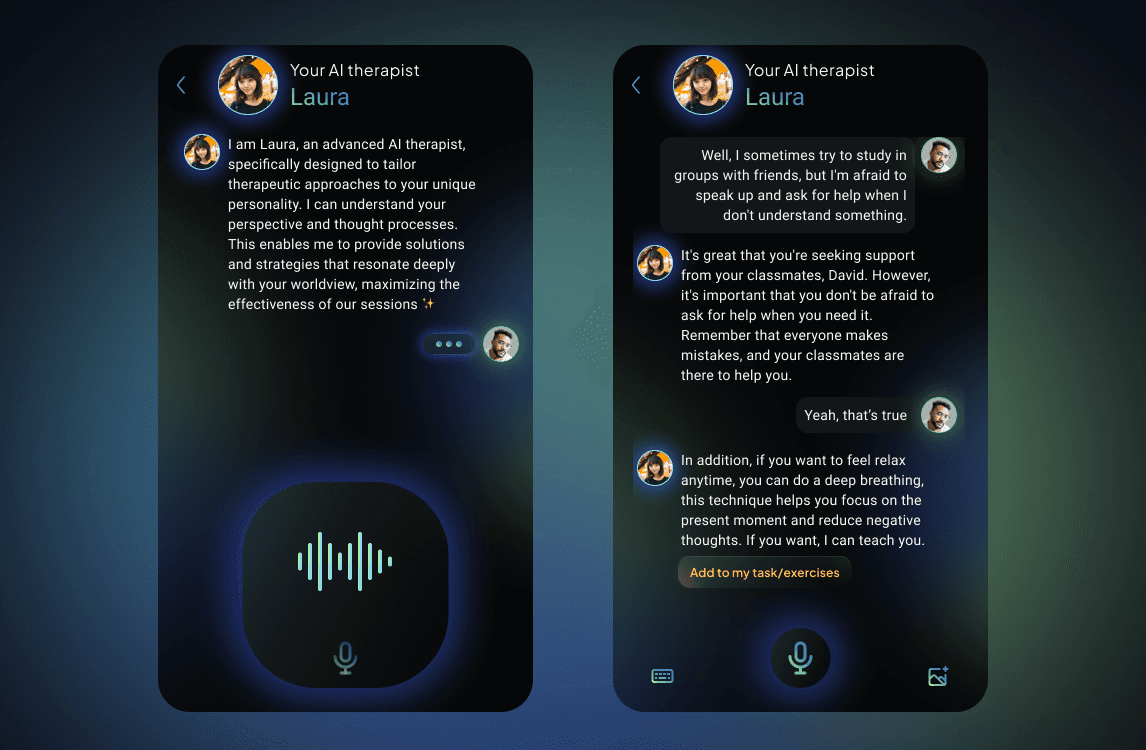

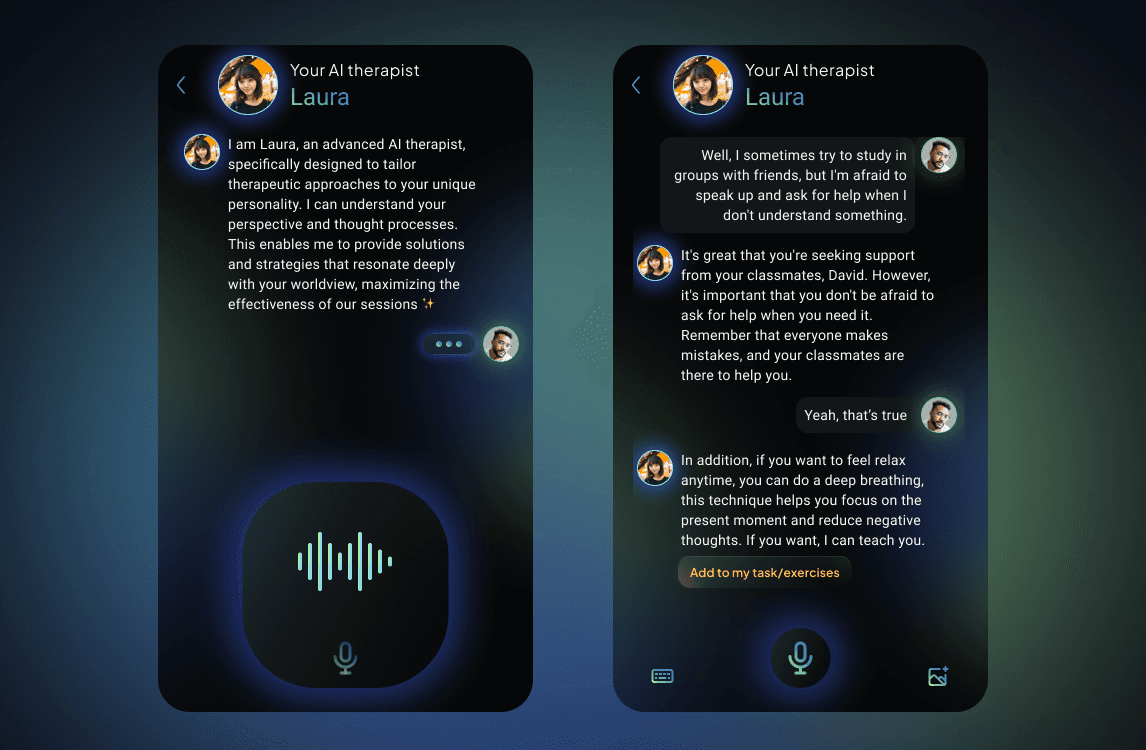

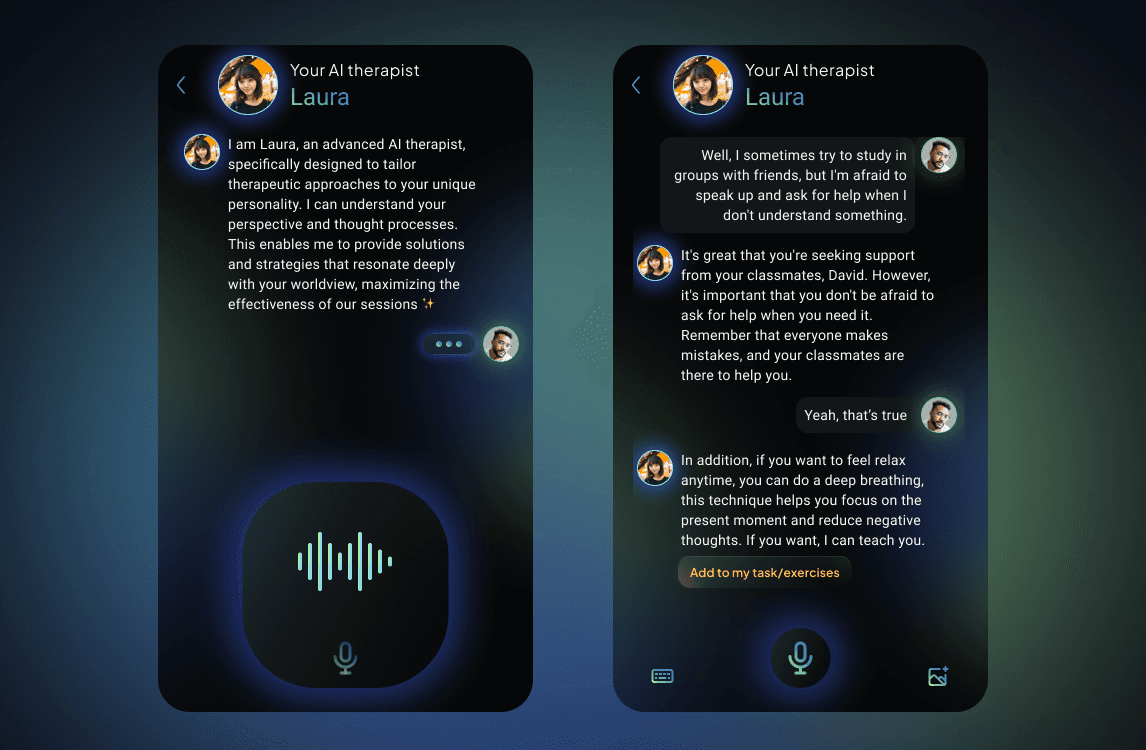

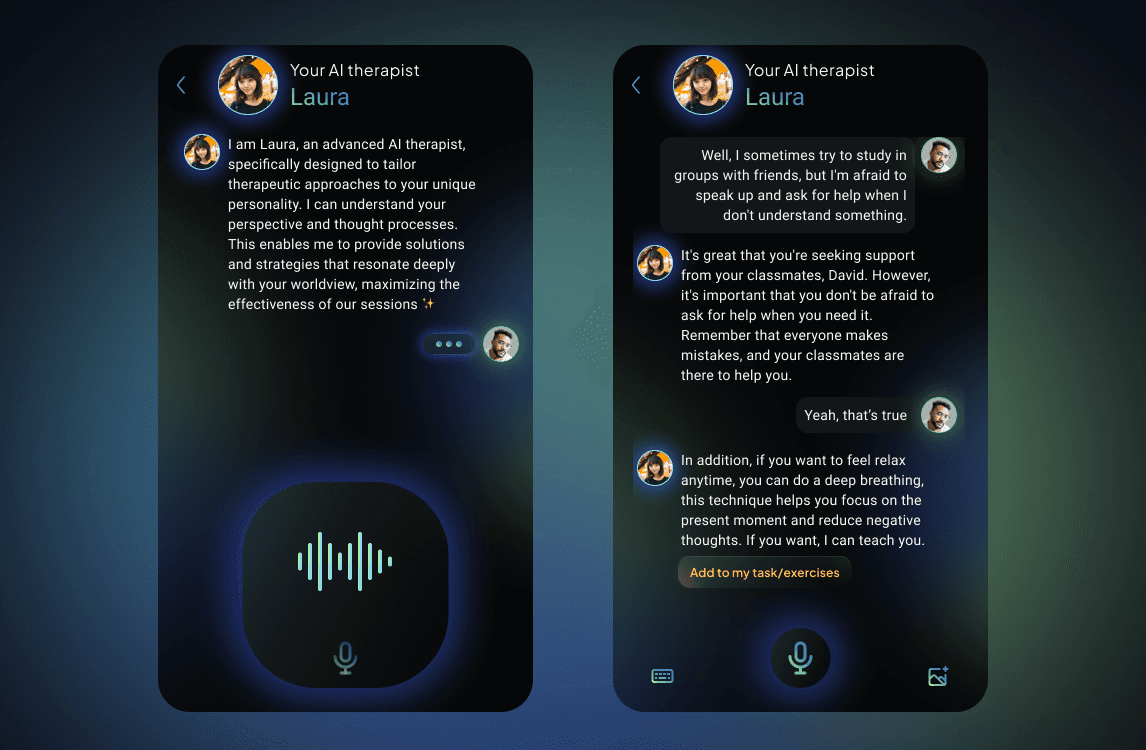

💬 Decision 1 — Voice-First Interaction for Emotional Openness

Instead of typing, users express themselves by voice.

Rationale: Speaking freely reduces stress and enables emotional nuance.

Impact: The voice interface mimics human dialogue, using micro-pauses, empathy markers, and tone analysis to personalize the depth of each response.

Outcome: Users felt more “heard” and less “judged,” according to qualitative validation.

(Visual reference: Chat & voice input screens.)

❤️🩹 Decision 2 — Emotional Design Framework (Norman’s Model)

The UI was structured around Norman’s three levels of emotional design (visceral, behavioral, and reflective), aiming for delight and comfort from the first interaction through to the memory of the session.

Visceral: soft gradients and pastel color palette to evoke calm and safety.

Behavioral: high readability, intuitive flows, and visible progress to generate a sense of control.

Reflective: post-session feedback reinforcing progress and self-compassion.

(Visual reference: onboarding & session summary screens.)

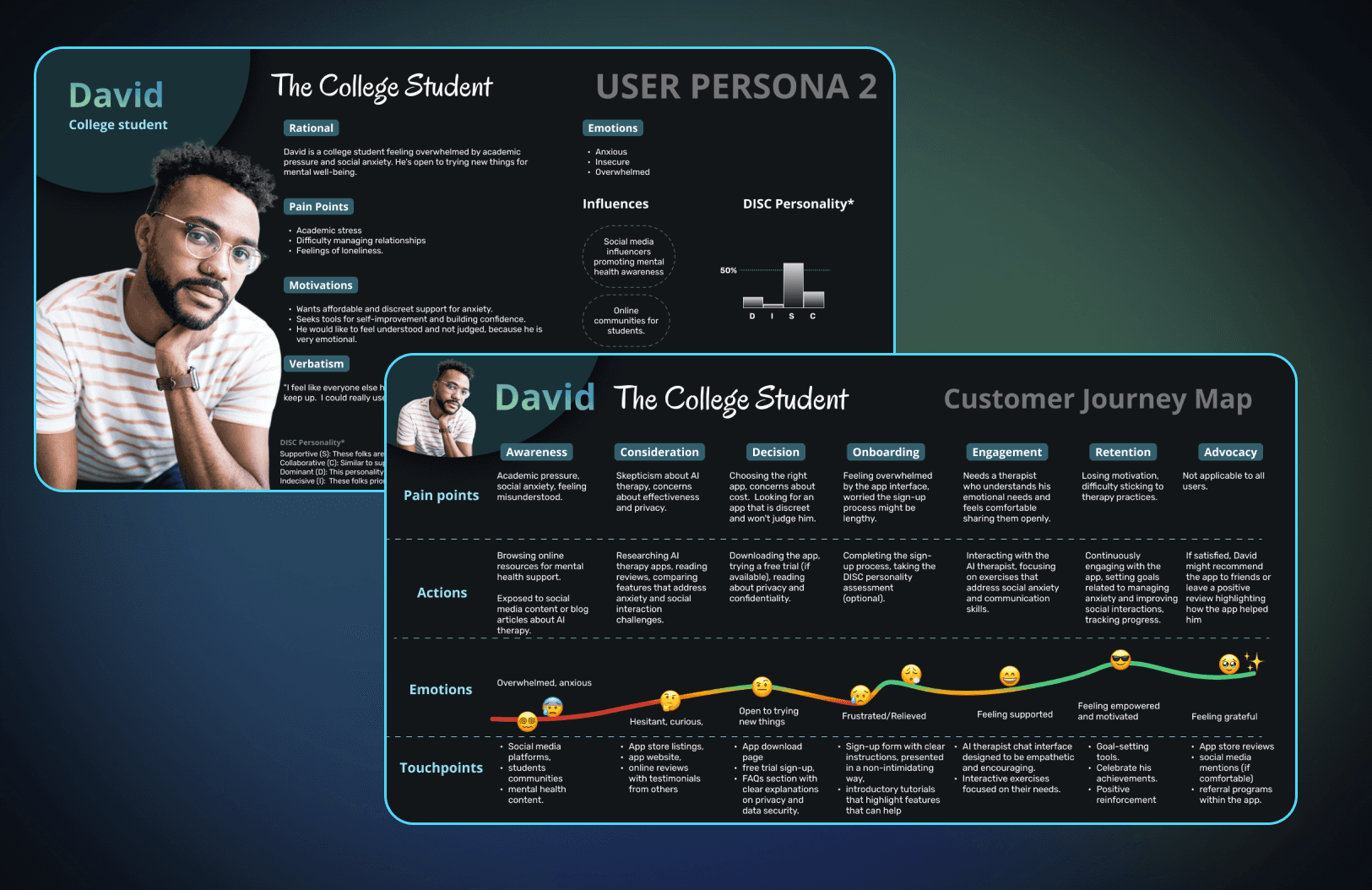

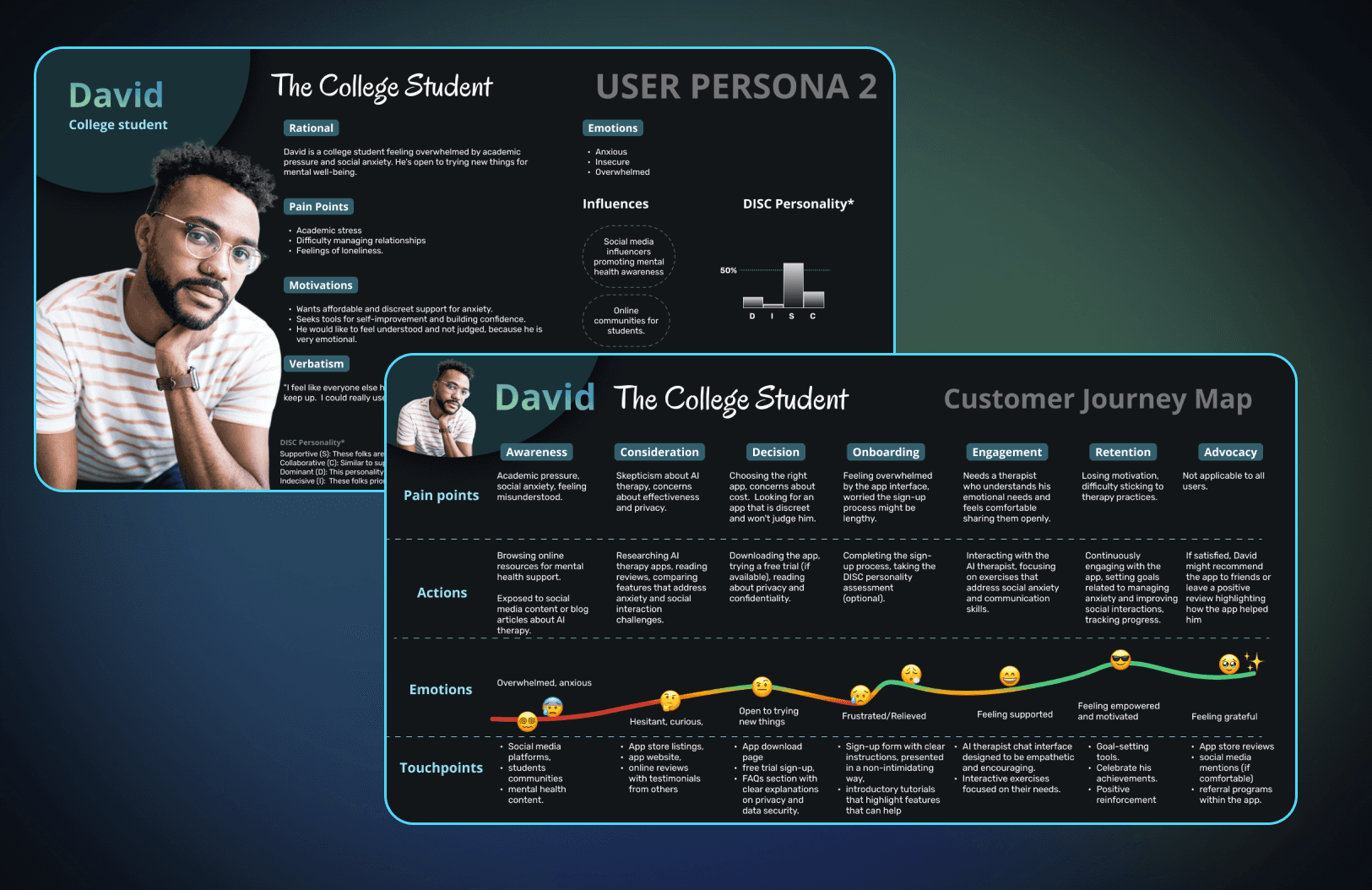

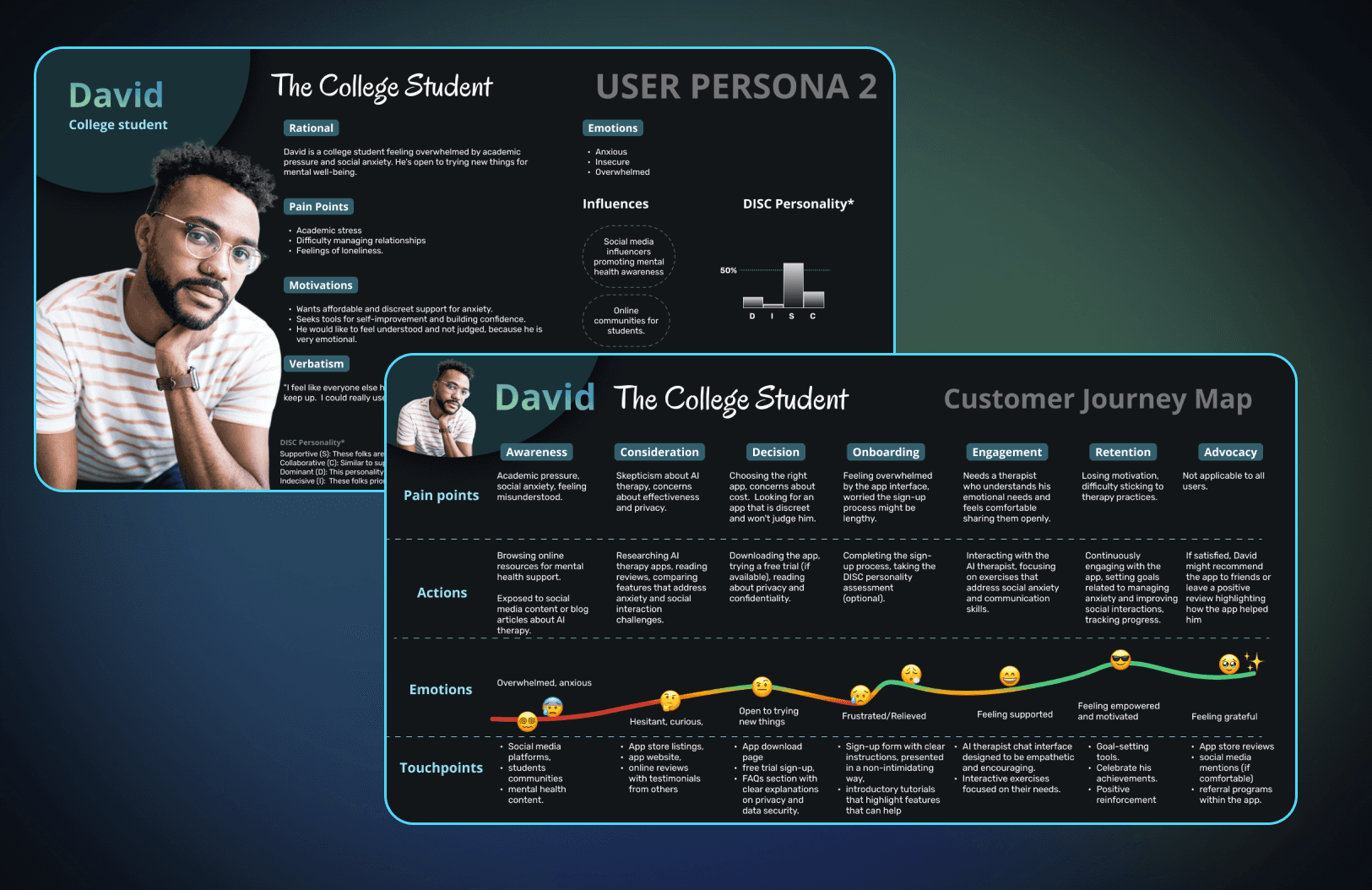

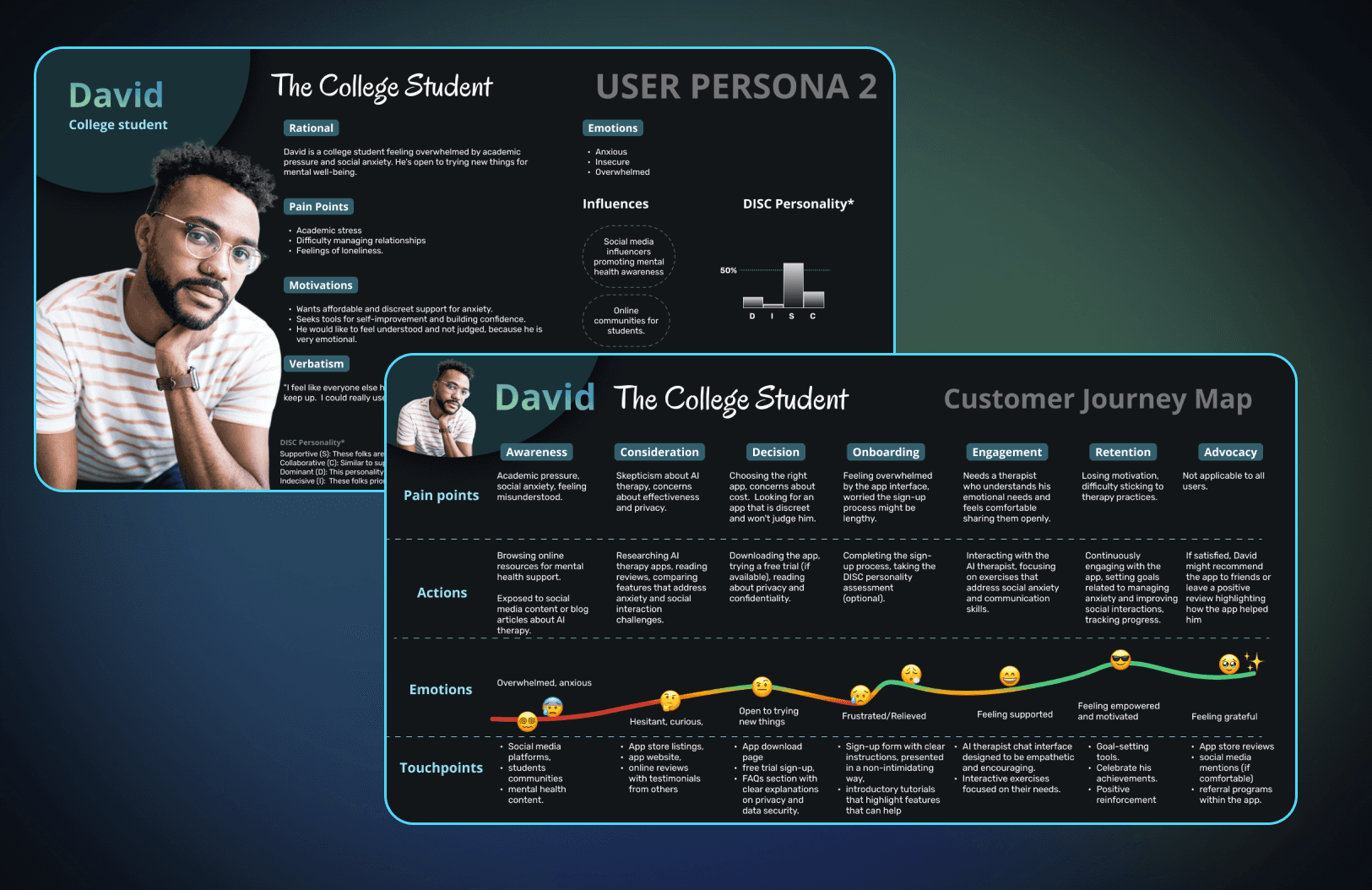

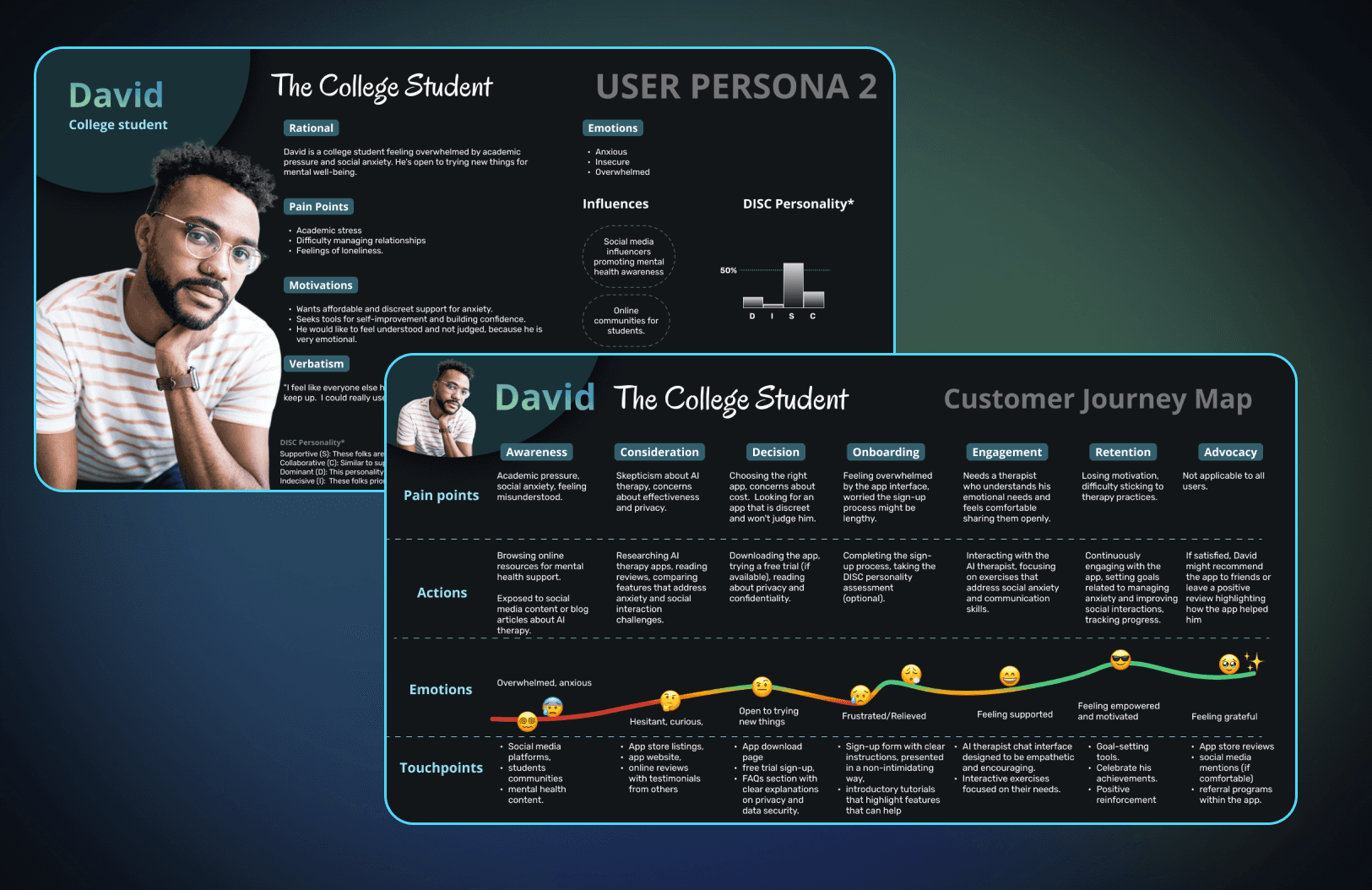

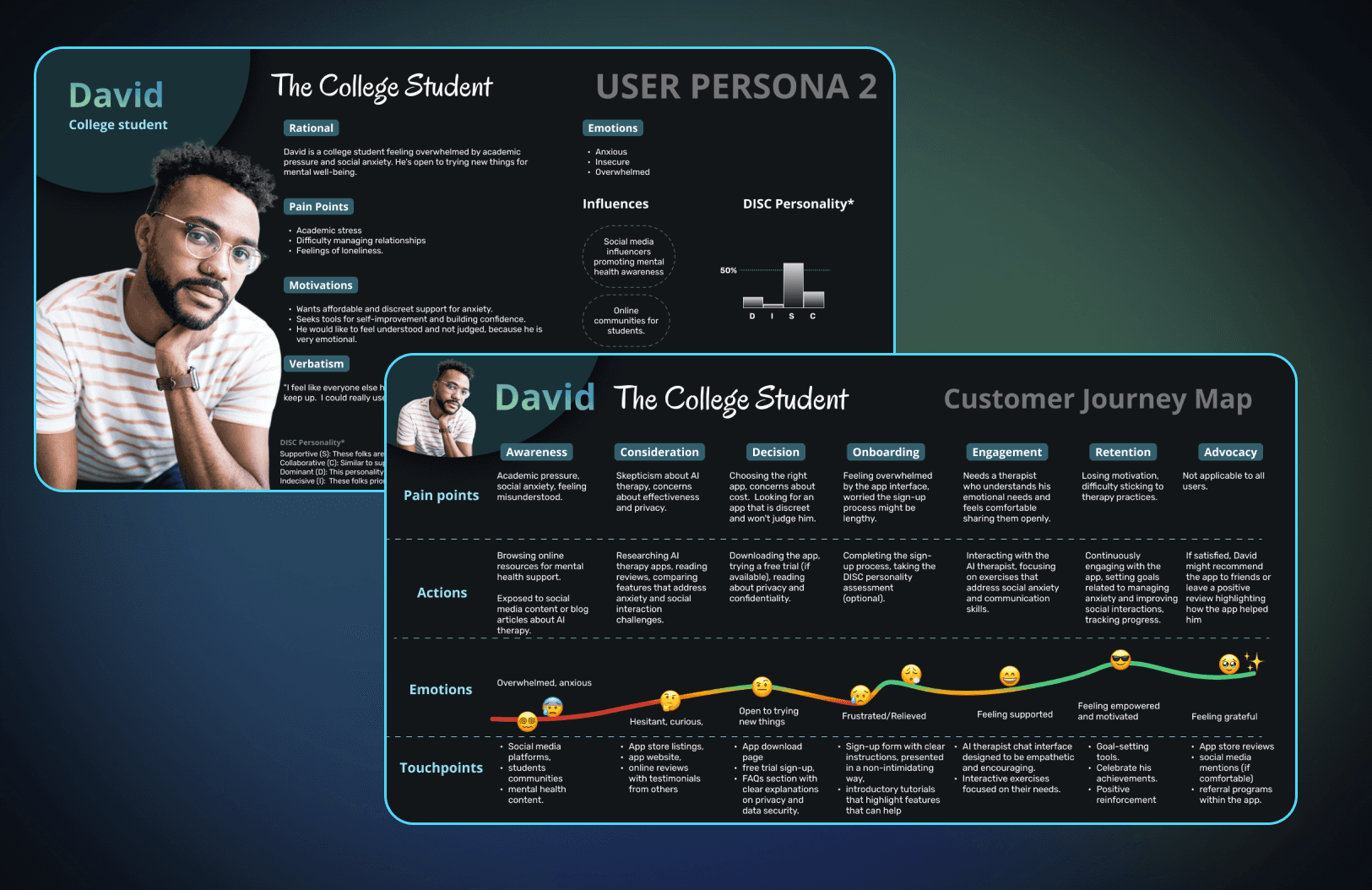

📊 Decision 3 — Personalization Through DISC Personality Test

Onboarding integrates a short DISC test to match users with an AI therapist aligned with their communication style.

Goal: build empathy consistency through psychological alignment.

Result: users receive tailored language tone, pace, and motivational framing, fostering authentic emotional resonance.

(Visual reference: DISC assessment and therapist customization flow.)
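As a rough illustration of the matching step described above, the sketch below tallies DISC answers and picks a tone profile for the dominant style. It is a minimal sketch under stated assumptions, not the prototype's actual logic: the `ToneProfile` fields, the profile values, and the `dominant_style` and `match_profile` helpers are all hypothetical names introduced here.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ToneProfile:
    """Hypothetical conversation settings for one DISC style."""
    tone: str
    pace: str
    framing: str


# Illustrative values only; these are assumptions, not settings
# taken from the Equilibrio prototype.
PROFILES = {
    "D": ToneProfile(tone="direct", pace="brisk", framing="goals and results"),
    "I": ToneProfile(tone="warm", pace="lively", framing="encouragement"),
    "S": ToneProfile(tone="gentle", pace="slow", framing="stability and reassurance"),
    "C": ToneProfile(tone="precise", pace="measured", framing="clear reasoning"),
}


def dominant_style(answers: list[str]) -> str:
    """Return the DISC letter chosen most often.

    Answers that are not D, I, S, or C are ignored.
    Ties resolve in D, I, S, C order.
    """
    counts = Counter(a for a in answers if a in PROFILES)
    if not counts:
        raise ValueError("no valid DISC answers")
    return max("DISC", key=lambda style: counts[style])


def match_profile(answers: list[str]) -> ToneProfile:
    """Map a list of onboarding answers to a tone profile."""
    return PROFILES[dominant_style(answers)]


if __name__ == "__main__":
    print(match_profile(["S", "S", "C", "I", "S"]))
```

A real assessment would likely weight questions and blend styles rather than pick a single letter; the single-winner rule here just keeps the idea easy to follow.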

🎭 Decision 4 — Inclusivity and Representation Through Avatars

The AI therapists are represented by a diverse range of human avatars, reinforcing inclusion and cultural neutrality.

Design choice: photography across age, ethnicity, and gender, presented neutrally.

Outcome: Users can project safety and trust onto the representation they choose, reducing alienation.

(Visual reference: avatar selection screen.)

📋 Decision 5 — Sustainable Retention via Task Feedback Loops

Therapy sessions generate personalized micro-tasks and emotional journaling prompts, transforming insights into habits.

Users can track streaks, goals, and metrics of emotional growth.

A “Knowledge for Future Therapies” system adapts each future session.

(Visual reference: task screen + home dashboard.)

Measurable Results

Although the project was built as a conceptual prototype, results were gathered through usability testing, emotional response testing, and AI-driven interaction modeling. Each outcome was tied to a design hypothesis from the research phase.

| Focus Area | Observation | Validated Output |
|---|---|---|
| Emotional Engagement (Voice Interface) | 7/10 testers described feeling more open and relaxed when speaking instead of typing. | Voice-first interface lowered anxiety and improved emotional disclosure. |
| Perceived Empathy & Trust | AI tone and conversation design scored 85% “empathetic” or “human-like” in post-session surveys. | Reinforced the value of language calibration and DISC-based personality matching. |
| Cognitive Load Reduction | 85% of users described the app as “calm” or “mentally light,” correlating with the color psychology decisions. | Confirmed emotional minimalism as a driver of trust and comfort. |
| Task Agreement & Feedback | 80% of users found the proposed tasks insightful, and 70% found the feedback accurate. | High engagement validated the micro-task and feedback loop design. |

Learnings

Language calibration (DISC-based) and soft interaction cues generated quantifiable improvements in trust. The emotional consistency of AI matters as much as UX consistency.

AI-powered therapy requires clear ethical framing. Equilibrio isn’t meant to replace human therapists. Instead, it should extend the accessibility of mental-health reflection, while establishing ethical boundaries:

Always disclose AI’s non-human nature.

Provide visible pathways to licensed human therapists in crisis scenarios.

Protect user emotional data with on-device privacy and explicit consent.

The future of AI design isn’t in building smarter systems but in building more emotionally intelligent experiences that honor human vulnerability without exploiting it.

🔗 Explore the Full Project

🧠 Research + Rational Process: Figma page

🚀 Interactive Prototype Flow

🌐 Product Equilibrio Landing Page
